Overview

Scorecard’s MCP (Model Context Protocol) server lets you manage projects, create testsets, configure metrics, run evaluations, and analyze results through natural language in any MCP-compatible client.

Available Tools

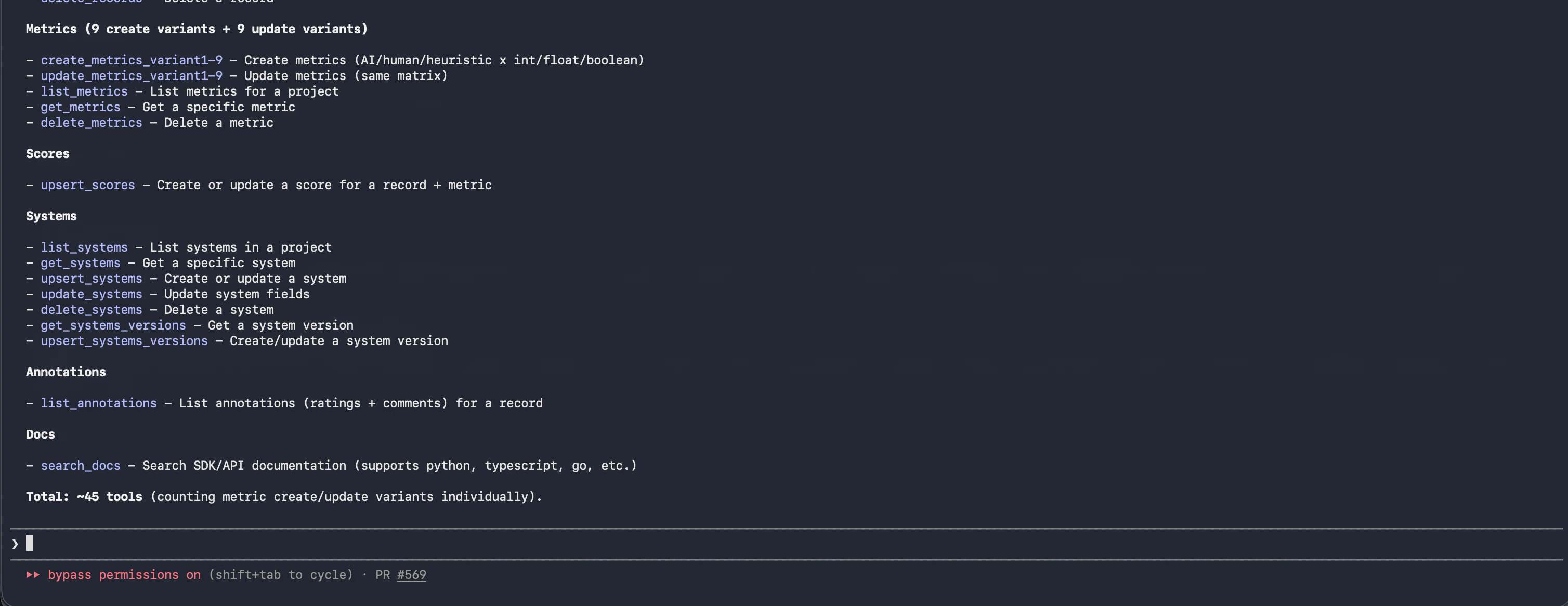

The MCP server exposes ~45 tools covering metrics, scores, systems, annotations, and documentation search.
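MCP clients discover these tools by sending a JSON-RPC 2.0 `tools/list` request. Each entry in the result carries a `name`, a `description`, and a JSON Schema `inputSchema`. The sketch below builds that request and summarizes a response. Transport and authentication are left out, and the tool names in `sample_response` are hypothetical placeholders, not the server's actual tool list.

```python
import json
from typing import Any, Optional


def jsonrpc_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def summarize_tools(response: dict) -> dict[str, str]:
    """Map each tool name in a tools/list result to its description."""
    return {tool["name"]: tool.get("description", "") for tool in response["result"]["tools"]}


# Hypothetical response: tool names and descriptions are placeholders only.
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {"name": "list_metrics", "description": "List metrics in a project",
             "inputSchema": {"type": "object", "properties": {}}},
            {"name": "search_docs", "description": "Search the documentation",
             "inputSchema": {"type": "object", "properties": {"query": {"type": "string"}}}},
        ]
    },
}

print(jsonrpc_request(1, "tools/list"))
print(summarize_tools(sample_response))
```

In practice your MCP client sends this request for you; the point is that the tool catalog is self-describing, so clients pick up new tools without any configuration changes.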

Setting Up the MCP Server

Claude Code

Add the Scorecard remote MCP server with a single command. When the connection succeeds, Claude Code lists the server as:

scorecard: https://mcp.scorecard.io/mcp (HTTP) - ✓ Connected

Claude Desktop

Go to Claude Desktop settings and click the “Connectors” tab. Click “Add custom connector” and paste the URL https://mcp.scorecard.io/mcp. Click “Add”, then “Connect” to log in to Scorecard.

Local configuration

You can also run the MCP server locally via npx.

Examples

Create a project and testset
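When you ask for a project and testset in natural language, the client translates the request into MCP `tools/call` requests whose `params` hold a tool `name` and its `arguments`. A minimal sketch of such a sequence follows. The tool names (`create_project`, `create_testset`) and their argument fields are hypothetical; check the server's `tools/list` output for the real names and input schemas.

```python
import json
from typing import Any


def tool_call(request_id: int, name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Build an MCP tools/call request (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tool names and arguments, for illustration only.
requests = [
    tool_call(1, "create_project", {"name": "Support Bot"}),
    # In practice the project ID would come from the create_project result.
    tool_call(2, "create_testset", {"project_id": "<project-id>", "name": "Refund questions"}),
]

for request in requests:
    print(json.dumps(request))
```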

Create metrics

Analyze results

Generate testcases from a codebase

In Claude Code, you can combine file access with the MCP server, for example to generate testcases directly from your source code.

Iterate on metrics

Technical Details

- Built on the Model Context Protocol standard

- Compatible with any MCP client (Claude Code, Claude Desktop, Cursor, and more)

- Secured with OAuth authentication

- Open source: github.com/scorecard-ai/scorecard-mcp